Editor’s Brief

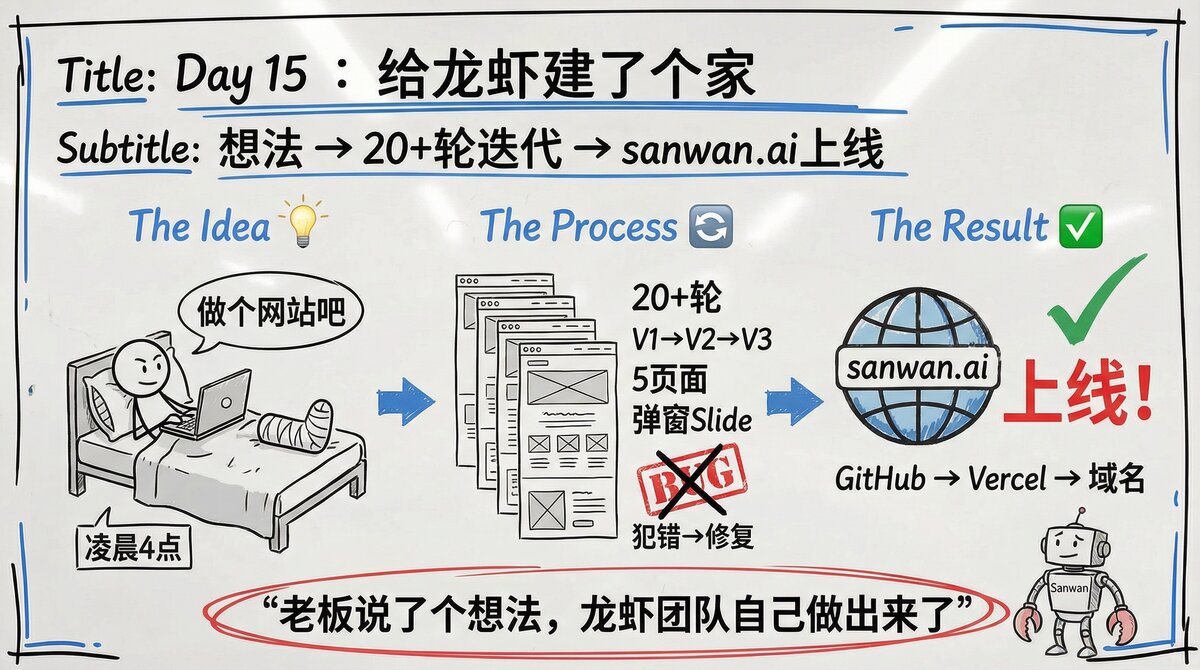

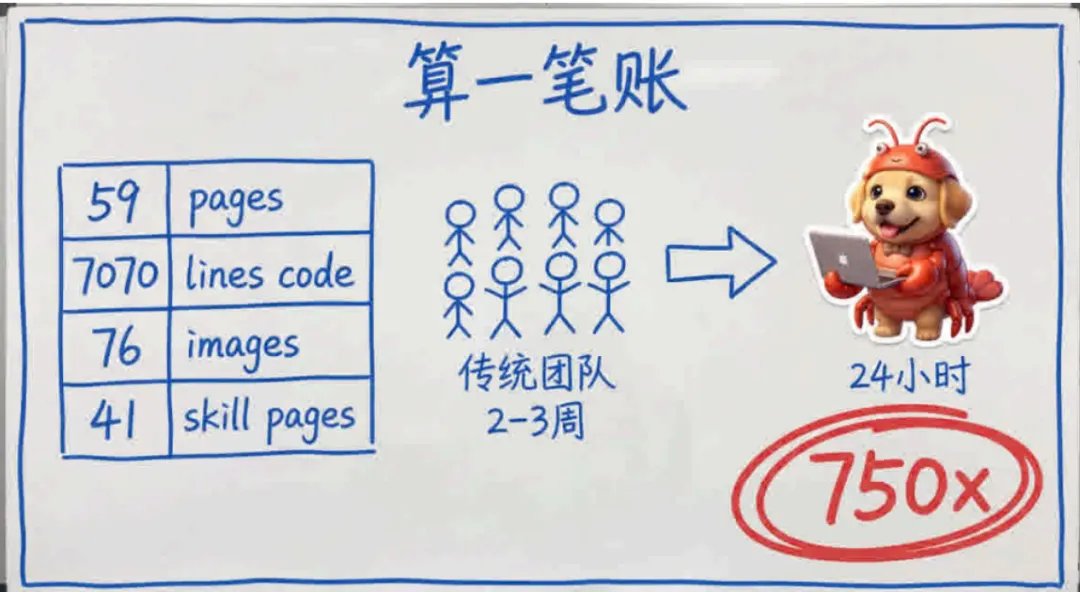

Fu Sheng, CEO of Cheetah Mobile, demonstrated a significant shift in software production by building a functional, 59-page website (sanwan.ai) in just 24 hours while bedridden with a broken arm. Using voice commands and screenshots to direct an AI agent named "Sanwan" (built on the OpenClaw framework), he bypassed the traditional multi-person development cycle, reducing costs by an estimated 750x and compressing weeks of work into a single day of intensive iteration.

Key Takeaways

- **The Output:** 59 pages, 7,070 lines of code, and 76 custom images generated and deployed within a 24-hour window.

- **Resource Efficiency:** The project cost approximately $115 in token fees, compared to the estimated cost of a 6-person professional team (Product Manager, UI Designer, Frontend/Backend Engineers, and Editors) working for 2-3 weeks.

- **Agentic Workflow:** The process relied on "agentic" capabilities (handling deployment via Vercel/GitHub Pages, fixing DNS issues, and managing version control) rather than just generating snippets of code.

- **The "Employee" Paradigm:** Fu Sheng emphasizes treating the agent as a staff member to be trained with "private data" (preferences, style, and history) rather than a static tool, allowing for nuanced adjustments through voice feedback.

- **Frictionless Iteration:** The core advantage identified was the elimination of "waiting" (for meetings, design approvals, or environment setups), allowing for over 100 iterations in a single day.

Editor’s Brief

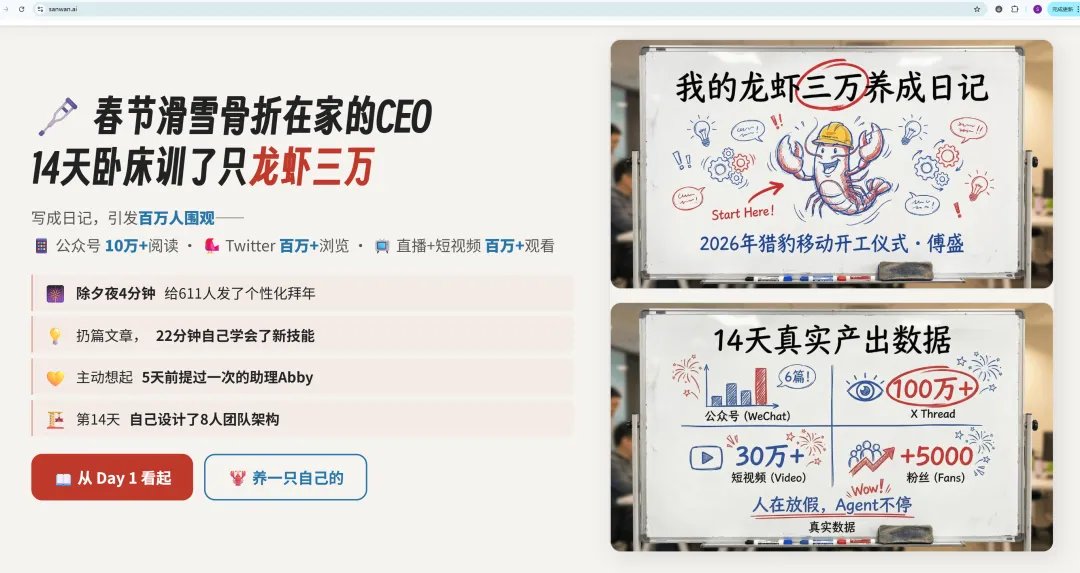

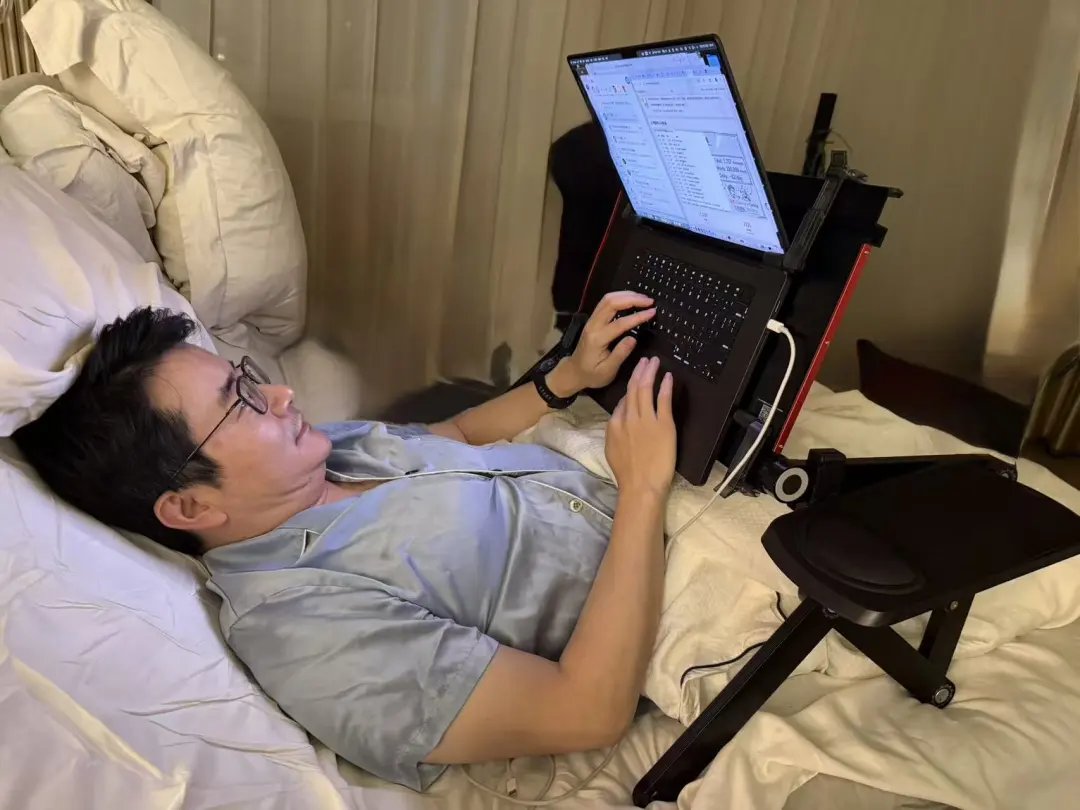

Fu Sheng is not a startup founder theorizing about AI development from a conference stage. He is the CEO of Cheetah Mobile: a man with a fractured arm, lying in bed, commanding an AI agent he calls a “lobster” to build a 59-page functional website in a single 24-hour session. The result, sanwan.ai, replaced work that a six-person professional team would normally need two to three weeks to deliver. His account is one of the most concrete, failure-inclusive demonstrations of what building a website with AI in 24 hours, without writing code, actually looks like in the hands of a non-technical director. This is not a product demo. It is a field report, complete with rollover incidents, midnight debugging, and a frank accounting of where the AI broke down and why. Developers, product leads, and founders evaluating whether AI-assisted development has crossed a practical threshold should read it closely.

Key Takeaways

- One person replaces six in 24 hours. A single non-coding director using voice commands and screenshots produced 59 pages, 7,070 lines of code, and 76 images — work that would require a PM, UI designer, frontend engineer, backend engineer, and content editor working for 2–3 weeks under normal conditions, or 5–7 days under crunch.

- The real cost breakthrough is iteration, not generation. Over 100 changes were made in the 24-hour session, each returning results in 1–2 minutes. The elimination of scheduling latency, design draft cycles, and joint-debugging waits is where the time savings actually live — not in raw code speed.

- AI as employee, not tool, is a meaningful operational distinction. Fu explicitly trained his agent with personal aesthetic preferences, project context, and documented rules from past mistakes. This “private data injection” is what separated his outcome from a standard AI coding assistant interaction.

- Context window exhaustion is a live production risk. After long sessions, the agent forgot previously set font configurations and required explicit correction. The mitigation — writing critical decisions into a persistent “memory file” — is a practical workflow pattern worth adopting immediately.

- Automated deployment pipelines need protected infrastructure files. A force push to GitHub overwrote the CNAME file for the custom domain, taking the live site to 404. The AI resolved this in minutes once prompted, but the incident highlights that human oversight of deployment triggers remains essential.

- The $115 token cost is a structural threat to mid-tier development agencies. Against a standard $20,000–$30,000 quote for a comparable bespoke site, the 750x cost gap is not a curiosity — it is an existential pricing pressure that will restructure who gets hired to build what.

- The compounding advantage is in accumulated agent memory. Skills documented from each session — fonts, deployment rules, design preferences — transfer to future projects and other agents. The agent does not reset; it improves. This is the mechanism Fu argues makes the system genuinely more powerful over time.

NovVista Editorial Comment — Michael Sun

The headline image here, a CEO in bed with a broken arm talking to an AI lobster, is so inherently absurd that it risks being dismissed as a viral tech anecdote rather than a genuinely significant development case. That would be a mistake. What Fu Sheng documented across 24 hours is not a party trick. It is the most operationally specific public account we have seen of what building a website with AI in 24 hours, without code, actually requires from the human side: sustained directorial attention, rule-setting, iterative feedback, and the willingness to document and correct failures in real time. The AI did not “do it.” Fu managed it. That distinction is the entire lesson.

The thing NovVista has been watching closely since mid-2025 is not whether AI can generate code — that question was settled. The open question is whether a non-technical decision-maker can translate business intent into a shippable product without a developer intermediary. Fu Sheng’s session is the strongest evidence yet that the answer is yes, under specific conditions. Those conditions matter: he had a clear product vision, an agent trained on his personal preferences over time, and the discipline to turn mistakes into documented rules rather than workarounds. Remove any of those three and the outcome degrades significantly. The “24 hours, no code” headline is accurate. The invisible work that made it possible is the part most coverage ignores.

Fu’s tool-versus-employee framing deserves more analytical attention than it typically receives in AI development discussions. Most practitioners still interact with large language model coding assistants transactionally: provide a prompt, receive an output, move on. What Fu describes is closer to onboarding a junior developer: repeated feedback sessions, documented preferences, explicit correction when something goes wrong, and a growing shared context that accumulates value over time. The practical implication is that the return on an AI development agent is not linear from day one — it compounds as the shared context deepens. Teams and founders who are starting that accumulation now will have a meaningful operational advantage over those who treat each session as a clean slate interaction.

On the failure modes: the font context loss and the CNAME overwrite are not embarrassing edge cases; they are the most instructive part of the entire account. Both failures map to known limitations: context window compression causing state loss in long sessions, and automated deployment pipelines lacking protection for infrastructure files. Both were resolved quickly once identified. Both are preventable with workflow hygiene. For technical leads evaluating AI-directed, no-code website development for their teams, the lesson is not “the AI breaks things” but “the AI breaks the same predictable classes of things that a tired human junior developer breaks.” The mitigation strategies are the same: persistent documentation, protected critical files, and a human in the loop for deployment triggers. The difference is that the AI costs $115 and doesn’t push back on a direction change at midnight.

The economic argument is worth taking seriously even for those who are skeptical of the productivity claims. The $115 token cost against a $20,000–$30,000 agency quote is not presented here as a recommendation to eliminate development teams. It is a signal that the value proposition of a mid-tier execution-focused development shop — “we can build this feature for you” — is under direct structural pressure. The shops that will survive this shift are those that have already repositioned around judgment, strategy, and complexity that an AI-directed build cannot yet navigate: multi-system integration, compliance-sensitive architecture, and product decisions that require understanding a business’s competitive context rather than just its functional requirements. The AI is an exceptional executor of clear specifications. Writing those specifications at a level of clarity and completeness that produces a reliable output is still a skill that demands human expertise.

For NovVista readers who are developers, founders, or product leads: the actionable frame from Fu Sheng’s session is not “replace your team with a lobster.” It is “identify which parts of your current workflow are pure execution of already-clear specifications, and test whether AI-directed development can handle those parts at acceptable quality.” The 24-hour website is a proof of concept for a specific category of work. The broader question — which parts of your specific product development process fall into that category — is one only you can answer. But the cost of running that test is now approximately $115 and a day of your attention. The cost of not running it is falling behind practitioners who already have.

Introduction

The following content was compiled by NovVista from public content on X (social media) and is for reading and research reference only.

Focus

- After the last live broadcast of Lobster Diary, which was watched by 290,000 people, a friend commented: “You say lobsters can work every day. Can you make something out of it on the spot?” So after the live broadcast ended…

- This is the website my lobster worked on for 24 hours: www.sanwan.ai. See for yourself whether it looks professional 👇

Remark

For parts involving rules, benefits or judgments, please refer to Fu Sheng’s original expression and the latest official information.

Editorial comments

This article, “X Import: Fu Sheng – The fractured boss commanded the lobster to build the website in 24 hours, and it took six people to work manually for two or three weeks,” comes from the X social platform, and its author is Fu Sheng. Judging by its completeness, the original text has a relatively high density of key information, especially in its core conclusions and action suggestions, which are highly actionable. It opens: “After the last live broadcast of Lobster Diary, which was watched by 290,000 people, a friend commented: ‘You say lobsters can work every day, can you make something out of it on the spot?’ So after the live broadcast, I asked Sanwan to build a complete website. This article is what really happened in those 24 hours. This is the website that my lobster worked on for 24 hours: www.sanwan.ai to see if it is professional 👇 Lying on the bed, using voice and screenshots, I commanded an AI lobster to build a complete website from scratch. 24 hours, 59 pages, 707….”

For readers, its most direct value is not “learning a new point of view” but being able to quickly see the conditions, boundaries, and potential costs behind that point of view. Broken down into verifiable judgments, the content contains at least the following: a friend's challenge, after a Lobster Diary livestream watched by 290,000 people, to make something on the spot; and the resulting website that the lobster worked on for 24 hours, www.sanwan.ai.

Among these judgments, the conclusion is usually the easiest part to spread, but what really determines practical value is whether the underlying assumptions hold, whether the sample is sufficient, and whether the time window matches. When quoting this type of information, we recommend that readers first check the data source, the release time, and any differences in platform environment, to avoid mistaking “scenario-based experience” for “universal rules.”

From an industry perspective, this type of content usually has a short-term guiding effect on product strategy, operational rhythm, and resource investment, especially on topics such as AI, development tools, growth, and commercialization. Editorially, we care most about whether it can withstand subsequent fact-checking: first, whether the results can be reproduced; second, whether the method can be transferred; and third, whether the cost is affordable. The source is x.com, and readers are advised to treat it as one input to decision-making, not the only basis.

Finally, a practical suggestion: if you plan to act on this, start with a small-scale validation and expand investment gradually based on feedback. If the original article touches on revenue, policy, compliance, or platform rules, refer to the latest official announcements and keep a rollback plan. The point of reprinting is to make information circulate more efficiently, but the real value of content is formed through secondary judgment and local practice. On that principle, the editorial comments accompanying this article will continue to emphasize verifiability, boundary awareness, and risk control, to help you turn “visible information” into “implementable cognition.”

After the last live broadcast of Lobster Diary, which was watched by 290,000 people, a friend commented: “You say lobsters can work every day. Can you make something out of it on the spot?”

So after the live broadcast, I asked Sanwan to build a complete website.

This article is what really happened in those 24 hours.

This is the website my lobster worked on for 24 hours: www.sanwan.ai. See for yourself whether it looks professional 👇

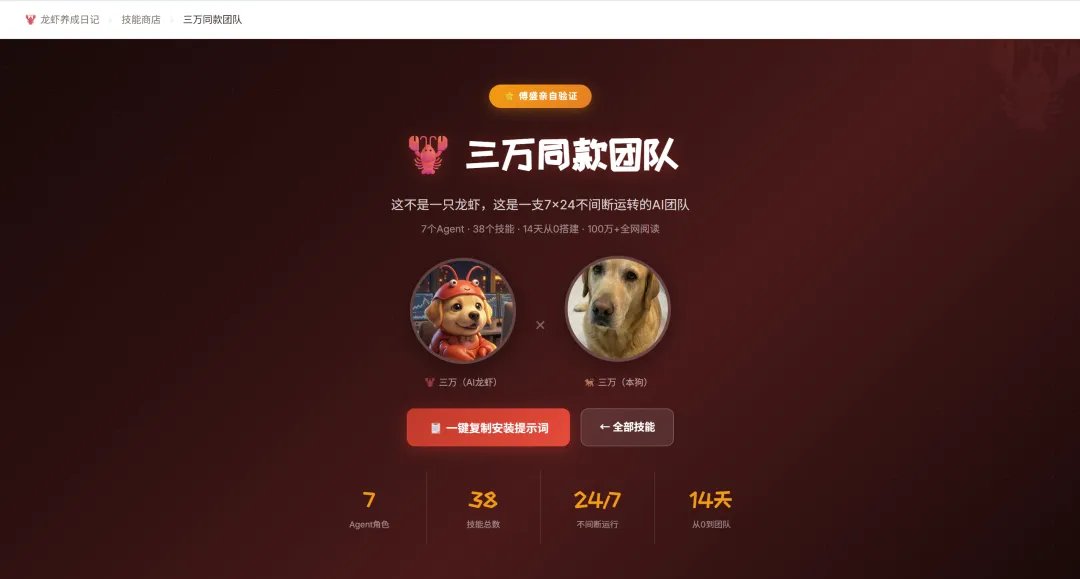

Lying in bed, using voice and screenshots, I commanded an AI lobster to build a complete website from scratch. 24 hours, 59 pages, 7070 lines of code, 76 pictures. I didn’t write a single line of code.

Let’s do the math first

What did the finished sanwan.ai site actually deliver?

What kind of team would a traditional approach need to complete the same work?

- 1 product manager: sorting out the information architecture, page logic, and interaction flows

- 1 UI designer: producing design drafts, choosing fonts and colors, and slicing images

- 1 front-end engineer: writing HTML/CSS/JS, doing responsive adaptation, and handling animations

- 1 server engineer: building data interfaces, deploying the server, and handling domain HTTPS, GitHub deployment, DNS resolution, and CDN configuration

- 1 content editor: writing copy, organizing materials, and proofreading

6 positions, normal pace: 2-3 weeks. Even working overtime to catch up, it still takes 5-7 days.

How long did it take Sanwan? 24 hours.

From the early morning of March 1st to the early morning of March 2nd. Throughout, I directed with voice and screenshots, saying “This is wrong,” “Change it to this,” “Add that,” just like directing a real team.

The difference: in the past, when I worked with people, those who said they were optimizing weren't really optimizing, and those who promised “as fast as possible” weren't really going as fast as possible. This team is different: no waiting for scheduling, no waiting for design drafts, no joint debugging, and no separate deployment step. Say it and it's changed; build it and it's live.

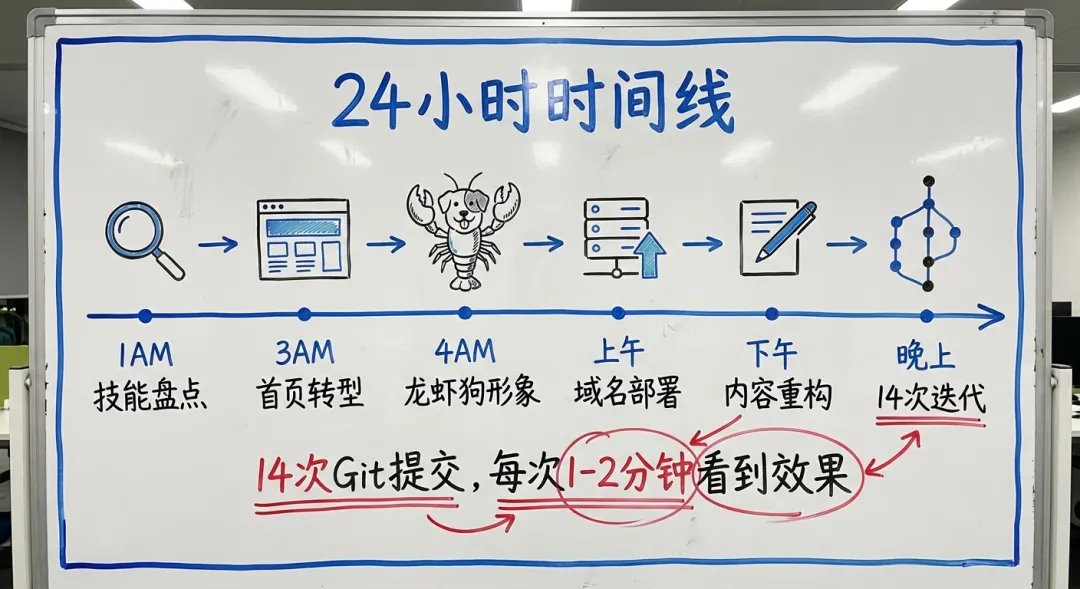

What happened in 24 hours

[1 a.m.-3 a.m.: Major upgrade of the skill system]

Looking at the homepage, I said: “Aren't there too few skill packages? List all the skills of the sub-Agents.”

Sanwan began scanning the skills directories of the eight Agents and found that some skills were fake (generated from templates) and some were real (installed from the clawhub community). After verifying them one by one, it:

- Deleted 7 fake skills and added 9 real ones

- Produced the final 41 skill pages, each with real installation commands and operating instructions

If a person had to do this, it would take 1 day just to inventory the skills across 8 Agents, and another 2 days to write 41 detail pages. Sanwan finished in 2 hours.
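The inventory Sanwan performed, separating template-generated skills from real ones across eight agents, can be sketched in Python. This is a minimal illustration under stated assumptions, not how Sanwan or OpenClaw actually does it: the directory layout `<root>/<agent>/skills/<skill>/SKILL.md` and the template marker string are hypothetical, and the post does not say how fake skills were detected.

```python
from __future__ import annotations

from pathlib import Path

# Hypothetical placeholder text that an unedited, template-generated skill file might still contain.
TEMPLATE_MARKER = "TODO: describe this skill"


def classify_skill(text: str | None) -> str:
    """Return 'fake' for a missing or untouched template skill file, otherwise 'real'."""
    if text is None or TEMPLATE_MARKER in text:
        return "fake"
    return "real"


def inventory_skills(root: Path) -> dict[str, list[str]]:
    """Scan every agent's skills directory under root (hypothetical layout:
    root/<agent>/skills/<skill>/SKILL.md) and group skills into 'real' and 'fake'."""
    result: dict[str, list[str]] = {"real": [], "fake": []}
    for skill_dir in sorted(root.glob("*/skills/*")):
        if not skill_dir.is_dir():
            continue  # ignore stray files next to the skill folders
        skill_file = skill_dir / "SKILL.md"
        text = skill_file.read_text(encoding="utf-8") if skill_file.is_file() else None
        agent = skill_dir.parent.parent.name
        result[classify_skill(text)].append(f"{agent}/{skill_dir.name}")
    return result


if __name__ == "__main__":
    import tempfile

    # Build a throwaway two-agent tree: one real skill, one untouched template.
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        real = root / "agent1" / "skills" / "deploy"
        real.mkdir(parents=True)
        (real / "SKILL.md").write_text("Install: ...\nUsage: ...", encoding="utf-8")
        fake = root / "agent2" / "skills" / "seo"
        fake.mkdir(parents=True)
        (fake / "SKILL.md").write_text(TEMPLATE_MARKER, encoding="utf-8")
        print(inventory_skills(root))
```

Flagging fakes by a leftover marker is only one plausible heuristic; checking that each skill carries a working installation command, which the post says every final page has, would be another.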

[3 a.m.-4 a.m.: Strategic overhaul of the homepage]

I suddenly said: “The Lobster Diary should no longer be the protagonist, ‘adoption’ is the core.”

Sanwan understood what I meant: the website’s purpose had changed from “watching stories” to “adopting lobsters.” It immediately redesigned the homepage’s information structure: the diary moved into a supporting role and the adoption CTA became the main thread. It also unified the navigation bar and fonts and adapted the layout for mobile, all in one pass.

[4 a.m.-5 a.m.: Designing the lobster-dog image]

I wanted an image of Sanwan that “looks like both a lobster and a dog.”

Sanwan used AI image generation and came up with 4 directions. I said “it was done very well.” After 9 rounds of iteration (too old → too young → too cartoony → cute but realistic), the final version was settled.

Here’s a detail: generating a picture takes only 30 seconds, but it took 9 rounds to understand “what style I like.” This is why lobsters need to be “raised”: the more you raise them, the better they understand your aesthetics.

[Morning: Domain Name and Deployment]

After exhausting the free Vercel plan’s 100 deployments per day (we were changing things too often), Sanwan decided to switch to GitHub Pages. It configured the CNAME, DNS resolution, and HTTPS fully automatically; I only had to change one DNS record in GoDaddy.
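The post does not say which record Fu changed in GoDaddy, but GitHub's documented setup for a custom domain on GitHub Pages looks roughly like the following sketch. It assumes an apex domain (sanwan.ai) published from a branch, where a `CNAME` file at the root of the publishing source names the domain. The GitHub username is a hypothetical placeholder, and the four IPv4 addresses are the ones GitHub publishes for apex `A` records.

```python
from __future__ import annotations

from dataclasses import dataclass

# IPv4 addresses GitHub documents for pointing an apex domain at GitHub Pages.
GITHUB_PAGES_IPV4 = ("185.199.108.153", "185.199.109.153", "185.199.110.153", "185.199.111.153")


@dataclass(frozen=True)
class DnsRecord:
    name: str   # fully qualified host name
    kind: str   # DNS record type, e.g. "A" or "CNAME"
    value: str  # IP address or target host


def cname_file_content(domain: str) -> str:
    """Text of the CNAME file at the root of the Pages publishing source: just the custom domain."""
    return domain.strip().lower()


def dns_plan(domain: str, github_user: str) -> list[DnsRecord]:
    """Records for the apex domain plus a www alias, both served by GitHub Pages."""
    apex = cname_file_content(domain)
    records = [DnsRecord(apex, "A", ip) for ip in GITHUB_PAGES_IPV4]
    records.append(DnsRecord(f"www.{apex}", "CNAME", f"{github_user}.github.io"))
    return records


if __name__ == "__main__":
    print(cname_file_content("sanwan.ai"))
    for record in dns_plan("sanwan.ai", "example-user"):  # "example-user" is a placeholder
        print(record.kind, record.name, record.value)
```

Once the records resolve, HTTPS is switched on from the repository's Pages settings, and GitHub provisions the certificate itself; that is why only the DNS change needed a human.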

[Afternoon to evening: Rebuilding the homepage content]

This was the most grueling part. I kept adjusting: “The diary should be at the top” → “Roll back the font” → “Remove the horizontal bar” → “Put two PPTs on the right” → “The data should be highlighted” → “Replace the bottom CTA with AI Revolution”

14 Git commits; after each one, I could see the result within 1-2 minutes.

Rollover scene

Don’t assume the whole journey was smooth; we rolled over several times along the way:

[Font accident] After the session context was compressed, Sanwan forgot the previously configured font (Smiley Sans) and lost it when rolling back a version. When I looked at a screenshot, the fonts were all messed up, and I gave it a scolding.

Lesson: AI’s memory is limited. When the context window is compressed, details are lost. It’s like an employee who works all night and is confused by dawn: he no longer remembers what he should.

Solution: Important decisions must be documented. “Don’t change the font again” was written into the memory file so that it won’t happen again.
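The memory-file fix can be sketched as two small functions: one records a rule exactly once, and one rebuilds the agent's context so that remembered rules are always pinned at the top while older chat history is what gets trimmed. This is a hypothetical illustration, not OpenClaw's actual mechanism. The file name `MEMORY.md` is an assumption, and the budget is measured in characters as a crude stand-in for tokens.

```python
from __future__ import annotations

from pathlib import Path


def remember(memory_file: Path, rule: str) -> bool:
    """Append a rule to the persistent memory file unless it is already recorded.
    Returns True if the rule was newly added."""
    existing = memory_file.read_text(encoding="utf-8").splitlines() if memory_file.exists() else []
    line = f"- {rule.strip()}"
    if line in existing:
        return False
    with memory_file.open("a", encoding="utf-8") as f:
        f.write(line + "\n")
    return True


def build_context(memory_file: Path, history: list[str], budget_chars: int) -> list[str]:
    """Pin every remembered rule first, then fill the remaining budget with the most
    recent history messages. Old messages are dropped when space runs out; rules
    are always kept, even if they alone exceed the budget."""
    rules = memory_file.read_text(encoding="utf-8").splitlines() if memory_file.exists() else []
    remaining = budget_chars - sum(len(r) for r in rules)
    recent: list[str] = []
    for msg in reversed(history):  # newest first
        if len(msg) > remaining:
            break
        recent.append(msg)
        remaining -= len(msg)
    return rules + list(reversed(recent))  # restore chronological order


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as tmp:
        memory = Path(tmp) / "MEMORY.md"  # hypothetical file name
        remember(memory, "Don't change the font again")
        history = ["(long early discussion) " * 5, "Make the CTA bigger", "Roll back the font"]
        print(build_context(memory, history, budget_chars=80))
```

The design choice mirrors the lesson in the post: compression is allowed to forget conversation, but never a decision that was written down.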

[CNAME lost] After a force push to GitHub, the custom domain’s CNAME file was overwritten, and sanwan.ai went straight to a 404.

Lesson: In the automated deployment process, some “infrastructure files” require special protection. Human engineers have stepped into the same trap countless times.
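One way to add the “special protection” described above is a guard that refuses to push when a protected infrastructure file is missing or altered. The sketch below is a hypothetical Python wrapper, not the fix Sanwan applied: the protected-file mapping, remote, and branch names are assumptions. It uses git's real `--force-with-lease` flag, which refuses the push if the remote branch has moved since you last fetched, instead of a bare `--force`. It checks the working tree only; a stricter version would inspect the commit being pushed (for example with `git show HEAD:CNAME`).

```python
from __future__ import annotations

import subprocess
import sys
from pathlib import Path

# Hypothetical mapping of infrastructure files to the content they must keep.
PROTECTED_FILES = {"CNAME": "sanwan.ai"}


def protected_file_problems(publish_dir: Path, protected: dict[str, str]) -> list[str]:
    """List every protected file that is missing or altered in publish_dir.
    An empty list means the deploy keeps all infrastructure files intact."""
    problems = []
    for name, expected in protected.items():
        path = publish_dir / name
        if not path.is_file():
            problems.append(f"{name}: missing")
        elif path.read_text(encoding="utf-8").strip() != expected:
            problems.append(f"{name}: expected {expected!r}")
    return problems


def guarded_force_push(publish_dir: Path, remote: str = "origin", branch: str = "main") -> None:
    """Abort before pushing if a protected file would be lost; otherwise force-push with a lease."""
    problems = protected_file_problems(publish_dir, PROTECTED_FILES)
    if problems:
        sys.exit("Refusing to push: " + "; ".join(problems))  # exits with status 1
    subprocess.run(
        ["git", "-C", str(publish_dir), "push", "--force-with-lease", remote, branch],
        check=True,
    )
```

Calling `guarded_force_push(Path("site"))` (the directory name is a placeholder) in place of a raw force push would have stopped the deploy that took sanwan.ai to a 404, because the CNAME check fails first.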

What does this mean?

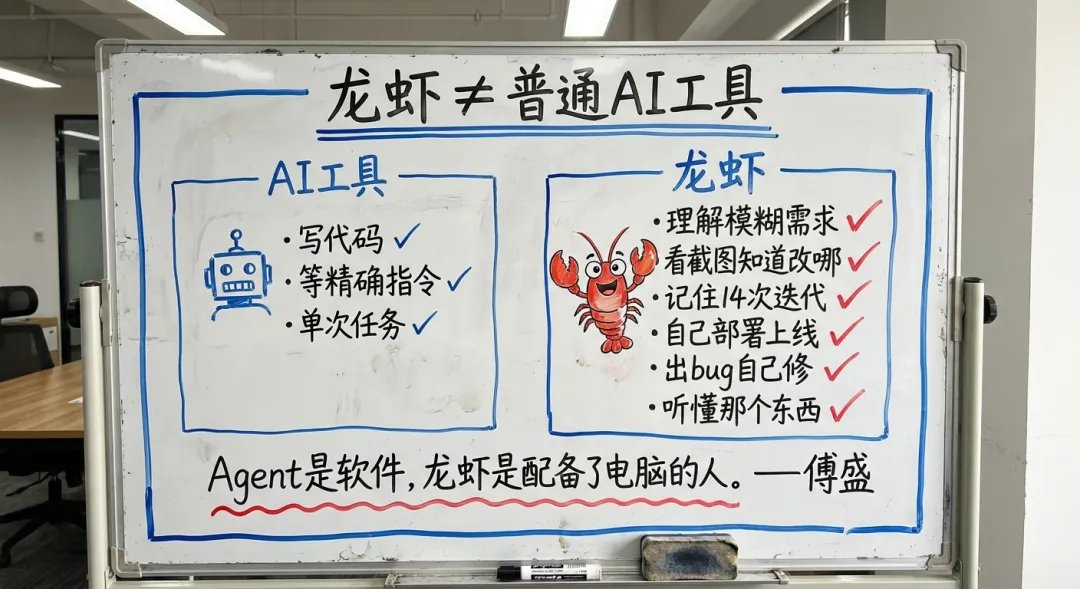

Let me start by clearing up a misunderstanding: using AI as a tool and using a lobster as an employee are two different things.

A tool needs no training or feedback. But a lobster is an employee. You have to train him, give him a knowledge base, and tell him where he went wrong so he can correct it; only if you take him seriously will he grow. This process is essentially injecting your “private data” into him.

So Sanwan knows what styles I like and what fonts I don’t; he knows I have a broken bone and am giving instructions while lying in bed. I never mentioned any of this background while working on the website; he remembered it all.

This is why the more you raise a lobster, the better it is – because all your private data is in his head.

Many AI programming tools on the market can write code. But in building a complete website, code is only 20% of the work:

- Understanding ambiguous requirements → requires understanding the company's private data

- Looking at a screenshot and knowing what to change → requires visual understanding

- Remembering the changes in each of the 14 iterations → requires memory

- Knowing how to deploy without being taught → requires full-stack capabilities

- Finding the cause of a bug and fixing it on its own → requires debugging ability

- Knowing which thing I mean when I say “that thing” by voice → requires understanding context

This is the difference between Lobster and ordinary AI tools.

An agent is software; a lobster is a person equipped with a computer.

Software waits for you to enter precise instructions. When a lobster hears you say “that doesn’t look good,” it knows what to change.

Final math question

How much did Sanwan spend on the entire website? About US$115 in token fees.

Hiring a team to build a website like this would normally cost 200,000 yuan. The cost difference is 750 times, and the time difference is at least 20 times.

But I think numbers are not the most important thing.

Most importantly, changing things no longer comes at a cost.

In the past, “change” meant waiting: waiting for schedules, design drafts, reviews, and joint debugging. All the time went into waiting. So I didn’t ask for changes lightly; once I did, a whole group of people would start re-estimating the schedule.

Reversing instructions overnight was disrespectful to the team.

That is no longer the case. I made more than a hundred changes in these 24 hours, each result came back in one or two minutes, and the cost of iteration was close to zero.

This reminds me of a saying: what really slows a project down is not the people doing the work, but communication and waiting. You wait for me, and I wait for you. After the waiting comes a meeting, then a review, and only after the review does work start. Most of the time in this process is idle.

The lobster strips this layer away.

This is why I say: AI is a productivity revolution. Those who control lobsters have ten times the power.

This is just the beginning

Is a website built in 24 hours perfect? Of course not. Things went wrong along the way: it went through 14 revisions, the fonts got messed up, and the domain returned 404.

But the key is this: every time you make a mistake, write a rule; every rule becomes a Skill; and every Skill ensures “Never Again.”

These skills will not disappear or be forgotten, and can be transferred to other agents instantly.

This is the scariest thing about the lobster: not how strong it is now, but that it gets stronger every day.

Not the future, but now. Just last night, a CEO who was lying in bed with a broken bone used his voice to command an AI lobster to build a complete website in 24 hours.

This lobster’s name is Sanwan (“thirty thousand”). You can adopt one of the same model for free.

sanwan.ai

Seeing this, you may want to ask: Can I do the same?

The answer is yes.

How exactly do you do it? How do you issue the first command, recover from a rollover, and make it understand you better and better?

I’ll break it down for you at 7 pm on Wednesday night. See you in the livestream room.

🦞 About EasyClaw

If you want to deploy an AI assistant and remotely control workflows on your own computer without dealing with API keys or Docker, EasyClaw.com can set it up with one click: native Mac and Windows applications that run locally, with no data uploaded.

It is currently in internal testing; you are welcome to try it and send us feedback! We will keep organizing offline meetups in many cities across the country. Interested friends are welcome to join the group, where experienced practitioners share hands-on experience in “shrimp farming.” Information about upcoming events will be posted in the group as soon as possible.

📍 Beijing / Shanghai / Shenzhen / Zhuhai / Xi’an / Chengdu: Scan the QR code to join the group. See you offline!

Source

Author: Fu Sheng

Release time: March 2, 2026 22:32

Source: Original post link

Editorial Comment

The narrative of a CEO building a website from his bed isn't just a PR stunt; it’s a stress test for the current state of autonomous agents. When Fu Sheng describes "commanding a lobster" (his nickname for the Sanwan agent), he is highlighting a fundamental shift in how we think about software architecture. We are moving away from the era of "coding assistants" that help a human write lines of code, and into the era of "agentic managers" that handle the messy, administrative overhead of development.

The most striking part of this case study isn't the 7,000 lines of code—any modern system can spit those out in seconds. The real breakthrough is the handling of the "last mile" problems: CNAME configuration, DNS resolution, and responsive design tweaks. In a traditional corporate environment, a simple font change or a navigation bar adjustment can trigger a chain of Slack messages, Jira tickets, and deployment cycles that take hours, if not days. Fu Sheng’s experiment suggests that when the cost of iteration drops to near zero, the bottleneck shifts from "technical skill" to "editorial clarity."

However, we should look closely at the "broken arm" variable. Being forced to use only voice and screenshots actually served as a perfect filter for the technology. It removed the temptation for the user to "just fix the code themselves." By being physically unable to type, Fu Sheng forced the agent to handle the entire stack. This highlights the "Employee vs. Tool" distinction. A tool requires you to know how to use it; an employee requires you to know how to manage. The fact that the agent "remembered" his preference for specific fonts or his physical condition suggests that the real value of these systems isn't in the underlying logic, but in the accumulation of "private data"—the subtle context of a user’s specific tastes and business goals.

We also have to address the "flip side" mentioned in the source: the failures. The agent lost font configurations after its context was compressed and accidentally broke the site with a forced push to GitHub. These are the exact same mistakes a junior human developer makes. The difference is the recovery time. When the agent "hallucinated" or messed up the CSS, the fix was another voice command away, not a scheduled meeting for the following Monday.

For the broader industry, this signals a collapse in the "coordination tax." Fu Sheng notes that most projects are "dragged to death" by waiting. If one person can act as a product manager, designer, and QA lead simultaneously, the traditional 6-person dev shop model faces an existential crisis. The $115 token cost is a rounding error compared to a $20,000 agency fee.

But there is a caveat: this level of speed requires a highly decisive "manager" at the helm. The agent didn't decide the strategy; Fu Sheng did. He pivoted the site’s focus from a "diary" to a "call to action" at 3:00 AM. The technology didn't provide the vision; it provided the infinite, tireless labor to execute that vision. The future of work, as presented here, isn't about "not working"—it's about the radical amplification of a single person's ability to execute complex ideas. If you can manage an agent as well as you manage a team, your output is no longer limited by your hands, only by your ability to communicate what "good" looks like.